AI Search Reputation Risk: An AEO Strategy Priority for 2026

AI search can flatten years of brand work into one wrong answer. Here is why reputation control is a 2026 AEO strategy priority across ChatGPT, Google, and Perplexity.

A strong brand used to be a buffer. If your website ranked, your reviews were solid, and your press coverage was clean, search generally reflected that reality.

That buffer is weaker now.

AI search engines do not present your brand as a list of sources. They compress dozens of signals into one answer. That answer may be accurate, sloppy, outdated, or flat-out wrong, but it is often the first thing a prospect sees. In practice, that means your reputation is no longer shaped only by your website, your review profile, or your PR. It is also shaped by how ChatGPT, Google AI Overviews, Perplexity, and similar systems summarize you.

That makes AI reputation a marketing problem, not just a PR problem.

Search Engine Land made the point clearly this week in its piece on AI search reputation risk: AI platforms pool sources, weight some signals more than others, compress nuance, and then reinforce the resulting narrative as those answers get shared and repeated. Reuters has highlighted the same underlying issue more broadly, noting that hallucinations remain one of the serious weaknesses in the AI business model even as adoption accelerates (Reuters).

If you run marketing for a healthcare group, SaaS company, law firm, treatment center, or local service business, you need to start auditing what AI says about your brand the same way you audit rankings, reviews, and conversion paths.

What Changed: Search Engines Now Summarize Before They Refer

Traditional search gave users a stack of options. They still formed opinions, but they formed them by clicking through multiple sources.

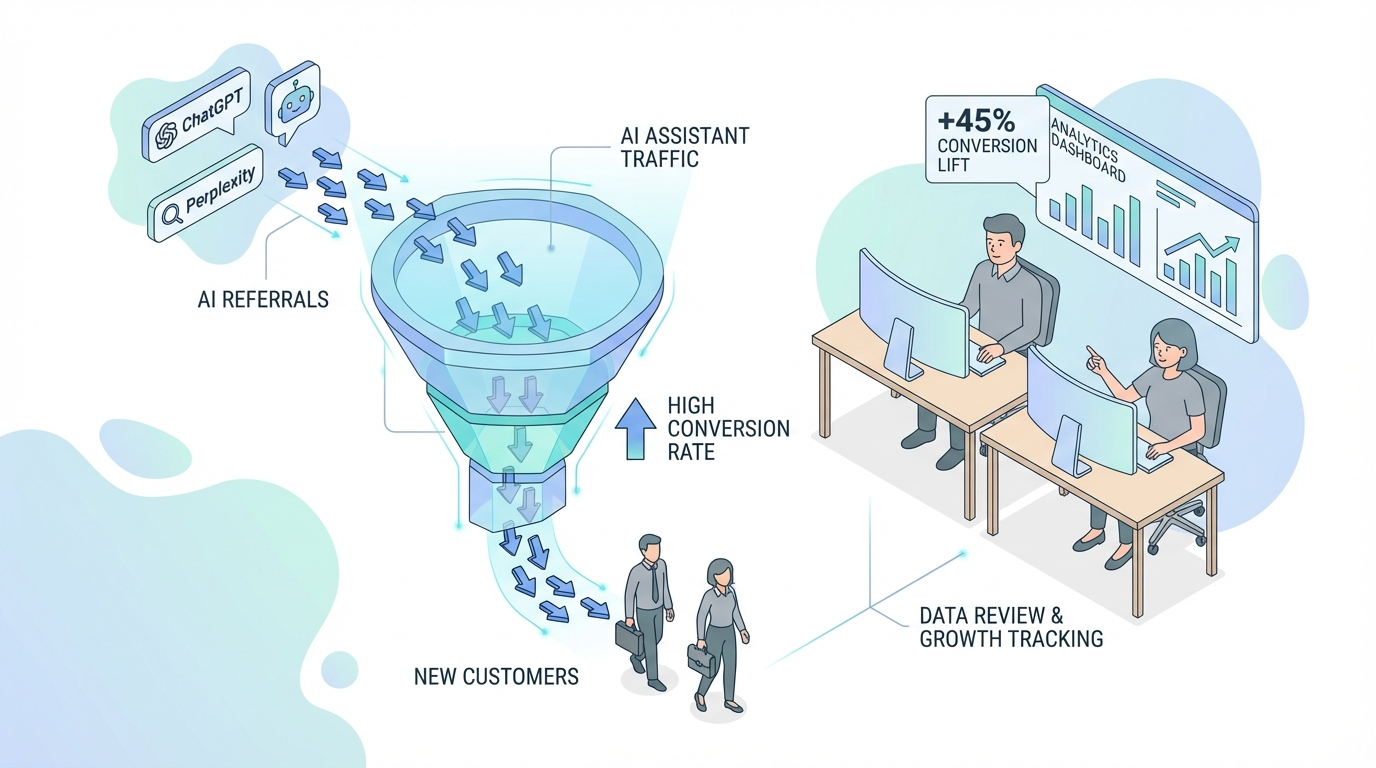

AI search changes that flow. The summary comes first. The click, if it happens at all, comes second.

That shift matters because the first impression is no longer entirely under your control. A prospect can ask:

- Is this company trustworthy?

- What do customers say about this brand?

- Is this rehab center legitimate?

- Is this software worth the price?

- What are the problems with this provider?

And they may get back a polished summary that blends reviews, Reddit threads, old blog posts, forum complaints, your own site copy, and random third-party mentions into one clean paragraph.

The danger is not only that AI might get something wrong. The danger is that it might get the tone wrong. It might over-weight an old complaint thread. It might frame an edge case as the main story. It might merge two similar brands. It might confidently describe your business using stale or incomplete information.

That is why the standard SEO logic of “if we rank, we’re fine” is no longer enough.

The Real Risk Is Narrative Compression

The most useful idea in the Search Engine Land article is narrative compression.

AI systems do not simply retrieve facts. They compress competing facts into a narrative. Once that narrative exists, users tend to treat it as the default interpretation.

For marketers, that creates three problems.

1. Nuance gets stripped out

A complex reputation can collapse into a single sentence like, “Users report mixed trustworthiness” or “The company has concerns around service quality.” That sentence may technically come from real inputs while still being deeply misleading.

2. Old issues can stay alive longer than they should

A complaint from years ago, a temporary customer service issue, or a one-off controversy can keep resurfacing if the content around it is still easy for language models to find and summarize.

3. Repetition starts to look like truth

Once a summary gets screenshotted, quoted, referenced in posts, or repeated by users, it creates new supporting material for the same claim. The loop feeds itself.

This is why AI reputation management cannot wait until there is a crisis. By the time leadership notices a problem, the negative framing may already be showing up across several answer engines.

Why This Sits With Marketing, Not Just PR

PR teams matter here, but marketing owns more of the inputs than most companies realize.

Marketing controls or influences:

- The website pages AI crawlers can cite

- The structure and clarity of service pages

- FAQ content and schema

- Case studies, bios, and author pages

- Third-party content placement and partnerships

- Review generation systems

- Brand consistency across platforms

- The topics you publish on and the evidence you attach to claims

In other words, marketing is responsible for much of the source material AI uses to decide what your brand is.

That is why this has become part of answer engine optimization (AEO). AEO is not only about getting cited for informational queries. It is also about making sure the machine-readable version of your company is accurate, credible, and hard to distort.

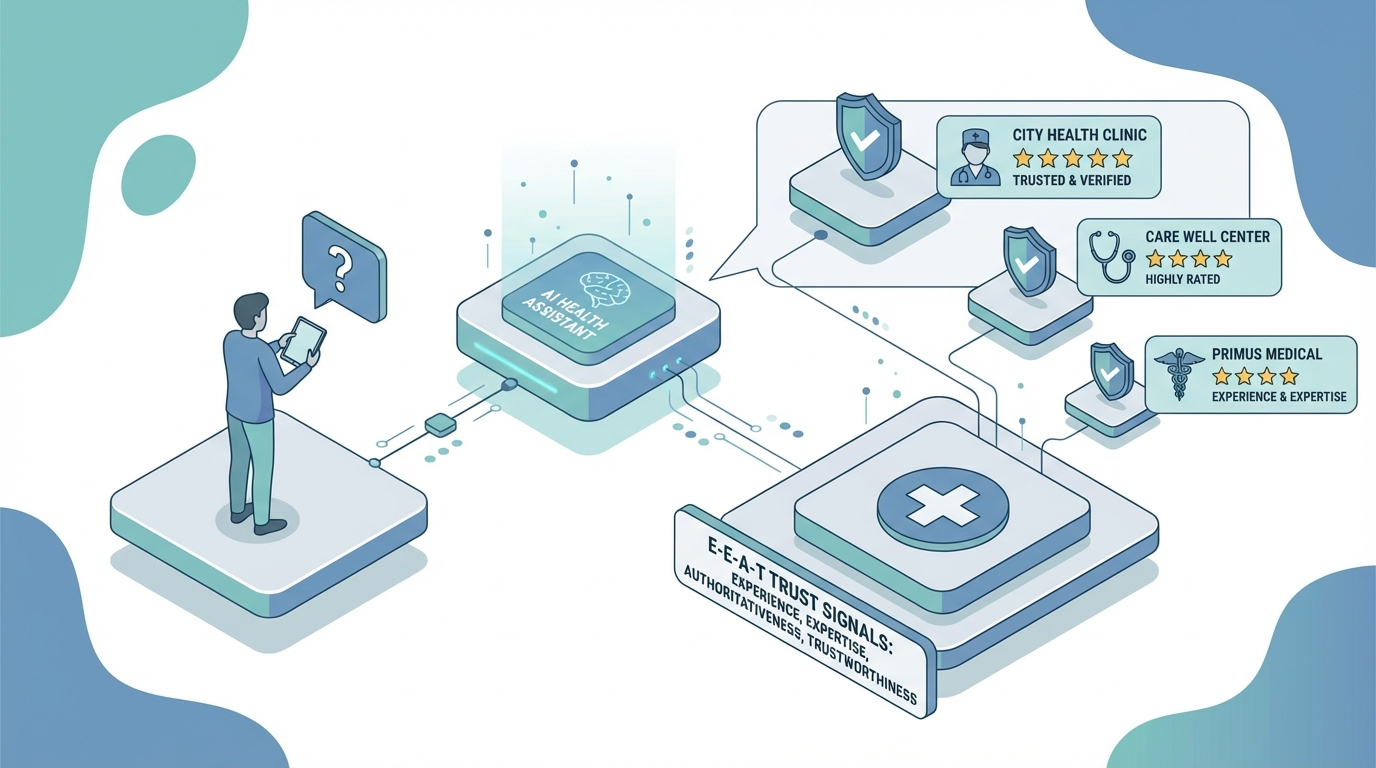

This is especially important in higher-trust categories. In healthcare, legal, finance, and B2B software, people increasingly use AI for early-stage vetting. They ask reputation questions before they ever fill out a form.

At Emarketed, we’ve seen how AI visibility shifts when the inputs improve. Seasons in Malibu grew AI mentions from 49 to 122 and increased cited pages from 122 to 190 while maintaining strong authority across core treatment terms. That kind of footprint matters because it gives AI systems more high-trust material to work with when forming a summary of the brand.

If the machine has five weak or inconsistent sources, it improvises. If it has fifty strong, aligned sources, it has much less room to drift.

How to Audit Your Brand’s AI Reputation

Most teams do not need a giant software stack to start. They need a repeatable process.

Start with the exact prompts buyers would use when they are trying to rule you in or out.

Examples:

- Is [brand] trustworthy?

- What do customers say about [brand]?

- Is [brand] legitimate?

- What are the pros and cons of [brand]?

- Best alternatives to [brand]

- Is [brand] good for [specific use case]?

Run those prompts across ChatGPT, Google AI Overviews, Perplexity, and Gemini. Capture the outputs. Save screenshots. Note the recurring claims. Then identify the sources the model appears to rely on.
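For teams that want the same grid every month, here is a minimal Python sketch of that step. It only builds the prompt-by-engine matrix and a record to paste each answer into; it does not call any AI platform. The brand name, the `AuditRecord` fields, and the run date are hypothetical placeholders, and the "[specific use case]" prompt is left out because it needs a second variable per use case.

```python
from dataclasses import dataclass, field
from datetime import date
from itertools import product

# Buyer-style prompt templates from the list above; "{brand}" is filled in per audit.
PROMPT_TEMPLATES = [
    "Is {brand} trustworthy?",
    "What do customers say about {brand}?",
    "Is {brand} legitimate?",
    "What are the pros and cons of {brand}?",
    "Best alternatives to {brand}",
]

ENGINES = ["ChatGPT", "Google AI Overviews", "Perplexity", "Gemini"]


@dataclass
class AuditRecord:
    """One captured answer. Hypothetical fields, not a standard schema."""
    engine: str
    prompt: str
    run_date: date
    answer: str = ""  # pasted in by hand after running the prompt
    cited_sources: list[str] = field(default_factory=list)


def build_audit_plan(brand: str, run_date: date) -> list[AuditRecord]:
    """Cross every engine with every prompt so each run covers the same grid."""
    return [
        AuditRecord(engine=engine, prompt=template.format(brand=brand), run_date=run_date)
        for engine, template in product(ENGINES, PROMPT_TEMPLATES)
    ]


plan = build_audit_plan("Example Co", date(2026, 1, 5))
print(len(plan))       # 4 engines x 5 prompts = 20
print(plan[0].prompt)  # Is Example Co trustworthy?
```

Keeping the grid fixed is the point: when a claim changes between runs, you can tell whether the engine changed its answer or you changed the question.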

You are looking for four things:

Claim accuracy

Are the facts correct? Products, services, locations, leadership, pricing model, outcomes, certifications, and positioning should all be checked.

Tone and framing

Even if the answer is technically accurate, is the overall narrative skewed negative, generic, or incomplete?

Source quality

Are strong sources driving the answer, or is the model leaning on weak third-party chatter, old directories, and forum posts?

Coverage gaps

What high-intent questions produce thin or confused answers? Those gaps usually point to missing content on your site or missing corroboration off-site.
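Source quality is the easiest of the four to tally once the cited links are captured. The hypothetical sketch below counts cited domains across one run and reports what share came from domains you have tagged as weak or as first-party. The domain lists are placeholders you would fill in for your own category, and the matching is exact, so a subdomain such as `old.reddit.com` would need its own entry.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical tiers; fill these in for your own category.
WEAK_DOMAINS = {"reddit.com", "old-directory.example"}
FIRST_PARTY_DOMAINS = {"example.com"}


def domain_of(url: str) -> str:
    """Reduce a cited URL to its host, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def source_quality_report(cited_urls: list[str]) -> dict:
    """Tally cited domains and the share coming from weak vs. first-party sources."""
    domains = Counter(domain_of(u) for u in cited_urls)
    total = sum(domains.values())

    def share(tier: set[str]) -> float:
        count = sum(n for d, n in domains.items() if d in tier)
        return count / total if total else 0.0

    return {
        "top_sources": domains.most_common(3),
        "weak_share": share(WEAK_DOMAINS),
        "first_party_share": share(FIRST_PARTY_DOMAINS),
    }


# Citations captured from one audit run (hypothetical).
cited = [
    "https://www.reddit.com/r/example/comments/abc",
    "https://reddit.com/r/example/comments/def",
    "https://example.com/about",
    "https://news.example.org/profile",
]
report = source_quality_report(cited)
print(report["top_sources"][0])     # ('reddit.com', 2)
print(report["weak_share"])         # 0.5
print(report["first_party_share"])  # 0.25
```

A rising weak share across runs is a signal to invest in off-site corroboration rather than in more on-site copy.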

If you want a structured workflow, this is where a tool like Emarketed’s AI Search Optimizer helps. The point is not vanity tracking. The point is catching narrative drift before it becomes a sales problem.

How to Fix a Bad AI Narrative

Once you identify a bad summary, the instinct is often to publish a defensive statement. That rarely solves the underlying issue.

The better move is to improve the source environment around the claim.

Publish stronger first-party proof

Create pages that answer sensitive trust questions directly. This may include:

- Clear leadership and expert bios

- Updated service and product pages

- Transparent pricing or pricing logic where appropriate

- Outcomes and case studies

- Review and testimonial pages

- FAQs that address objections in plain language

Tighten entity consistency

Make sure your brand name, descriptions, locations, specialties, and differentiators are consistent across your website, directories, social profiles, and third-party mentions. Inconsistent entity data gives AI systems permission to improvise.
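One quick way to spot entity drift is to put each profile's core fields side by side and flag any field that still has more than one value after light normalization. This is a sketch under assumptions: the profile snapshot is invented, the data is entered by hand rather than pulled from any directory API, and the normalization rules (digits-only phone numbers, lowercase names without periods) are one reasonable choice, not a standard.

```python
# Hypothetical snapshot of how each profile describes the brand (entered by hand).
PROFILES = {
    "website": {"name": "Example Co", "city": "Malibu", "phone": "(310) 555-0100"},
    "directory_a": {"name": "Example Co.", "city": "Malibu", "phone": "310-555-0100"},
    "social": {"name": "ExampleCo Inc", "city": "Los Angeles", "phone": "310.555.0100"},
}


def normalize(field: str, value: str) -> str:
    """Light normalization so formatting differences are not flagged as conflicts."""
    if field == "phone":
        return "".join(ch for ch in value if ch.isdigit())
    return " ".join(value.lower().replace(".", "").split())


def find_inconsistencies(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Map each field with more than one normalized value to the sources using each value."""
    fields = sorted({f for profile in profiles.values() for f in profile})
    issues = {}
    for f in fields:
        values: dict[str, list[str]] = {}
        for source, profile in profiles.items():
            if f in profile:
                values.setdefault(normalize(f, profile[f]), []).append(source)
        if len(values) > 1:
            issues[f] = values
    return issues


for field_name, variants in find_inconsistencies(PROFILES).items():
    print(field_name, variants)
```

Here the phone numbers match once punctuation is stripped, but the name and city are flagged. Those are the kinds of small differences that leave room for a model to merge or misdescribe entities.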

Build supporting citations off-site

If AI keeps leaning on weak sources, you need better external corroboration. That might include earned media, podcast appearances, expert contributions, partner mentions, review platform improvements, and thought leadership content on sites that already carry trust.

Update or outrank stale pages

Old pages often survive because nobody bothered to replace them with something stronger. If the web still points to an outdated version of your brand, AI will keep finding it.

Monitor for repetition

G2 recently called out an important measurement shift: teams should track not just AI visibility, but signals like sentiment, hallucination rates, correction rates, and message pull-through in AI answers (G2). That is the right mindset. You are not just measuring mention volume. You are measuring whether the model is describing your company correctly.
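The formulas below are our own working definitions, not G2's: hallucination rate as the share of reviewed answers a human flagged as factually wrong, correction rate as the share of last run's flagged engine-and-prompt pairs that no longer show the error when re-run, and pull-through as the share of answers mentioning at least one of your key messages. Sentiment is left out because it depends on whichever classifier or reviewer you use. The sample answers and key messages are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewedAnswer:
    engine: str
    prompt: str
    answer: str
    has_factual_error: bool  # set by a human reviewer, not detected automatically


def hallucination_rate(answers: list[ReviewedAnswer]) -> float:
    """Share of reviewed answers flagged as containing a factual error."""
    if not answers:
        return 0.0
    return sum(a.has_factual_error for a in answers) / len(answers)


def pull_through_rate(answers: list[ReviewedAnswer], key_messages: list[str]) -> float:
    """Share of answers mentioning at least one key message (case-insensitive substring)."""
    if not answers:
        return 0.0
    hits = sum(any(m.lower() in a.answer.lower() for m in key_messages) for a in answers)
    return hits / len(answers)


def correction_rate(previous_errors: set[tuple[str, str]],
                    current_errors: set[tuple[str, str]]) -> float:
    """Share of last run's flagged (engine, prompt) pairs no longer flagged.
    Assumes every previously flagged pair was re-run this time."""
    if not previous_errors:
        return 0.0
    return len(previous_errors - current_errors) / len(previous_errors)


# Hypothetical sample run.
answers = [
    ReviewedAnswer("ChatGPT", "Is Example Co trustworthy?",
                   "Example Co is a licensed provider with strong reviews.", False),
    ReviewedAnswer("Perplexity", "Is Example Co trustworthy?",
                   "Reviews are mixed, and the company closed in 2019.", True),
    ReviewedAnswer("Gemini", "Is Example Co legitimate?",
                   "Yes, Example Co is a licensed, established provider.", False),
]
previous = {("Perplexity", "Is Example Co trustworthy?"), ("Gemini", "Is Example Co legitimate?")}
current = {(a.engine, a.prompt) for a in answers if a.has_factual_error}

print(hallucination_rate(answers))               # 1 of 3 answers flagged
print(pull_through_rate(answers, ["licensed"]))  # 2 of 3 answers carry the message
print(correction_rate(previous, current))        # 0.5: the Gemini error is gone
```

Substring matching for pull-through is crude and will miss paraphrases, so treat it as a floor and spot-check answers by hand.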

What Good AEO Looks Like for Reputation Defense

A lot of brands still think AEO is mainly about blog posts built to chase citations. That is only half the job.

Good AEO for reputation defense means:

- Your core pages are structured clearly and answer real buyer questions fast

- Your expertise is visible through authorship, bios, and proof

- Your most important claims are backed by data, examples, and external references

- Your site architecture makes it easy for AI systems to understand what you do and who you serve

- Your off-site footprint reinforces the same story your site tells

That is why this work tends to overlap with AEO strategy and broader authority building, not just traditional online reputation management (ORM).

The brands that will do best over the next year are not the ones with the prettiest statements after a problem appears. They are the ones feeding answer engines a cleaner, richer, more defensible version of the truth before the problem starts.

FAQ: AI Search Reputation Risk

Can AI really hurt a brand even if the website and reviews are strong?

Yes. AI systems summarize from multiple inputs, not just your website and primary review platforms. A strong site can still be outweighed by stale or noisy third-party content if the model treats it as relevant.

Is this mainly a problem for big brands?

No. Mid-market and niche brands are often more exposed because they have fewer high-authority sources defining them online. When there is less trustworthy material to work with, AI has more room to distort the picture.

What should marketers check first?

Start with reputation-focused prompts in the major answer engines. Capture outputs for trust, reviews, legitimacy, controversy, alternatives, and category-specific concerns. Then compare the answers against what your buyers should actually learn about the brand.

How often should we audit AI answers about our company?

Monthly is a good baseline for most companies. Weekly makes sense if you are in a sensitive category, actively running PR, or recovering from a reputation issue.

Is fixing AI reputation mostly a technical task?

No. Technical cleanup matters, but the larger job is editorial and strategic. You need better inputs: clearer pages, stronger proof, tighter entity consistency, and more authoritative citations.

Does this replace SEO?

No. It expands it. SEO still matters, but rankings alone no longer protect your brand narrative. You need to manage how search engines and answer engines describe you, not just whether they list you.

What to Do This Week

Run an AI reputation audit on your own brand.

Ask five trust-based questions in ChatGPT, Google AI Overviews, and Perplexity. Save the outputs. Highlight anything outdated, vague, or wrong. Then trace those claims back to the sources feeding them.

If the machine version of your company feels thinner, harsher, or less accurate than the real one, do not treat that as an AI quirk. Treat it as a marketing issue with revenue consequences.

That is the new standard: not just ranking well, but being summarized well.