OpenClaw LA #3 Recap: Memory Systems, Local Models, and the Rise of Agent Marketplaces

Our third OpenClaw LA meetup went deep on memory architecture, token economics, and orchestration-first agent design. Here's everything that was covered.

Last night we held our third OpenClaw LA meetup, and this one hit different. The room was packed, the energy was high, and the presentations went deep on problems that every serious OpenClaw user is wrestling with right now: how to manage memory, how to stop burning through tokens, and how to actually structure your agents so they don’t trip over each other.

Huge thanks to Michael and Amy Dermody from Colab Marketing Group for sponsoring the drinks, and to Miguel from Cactus Face for catering the food. Miguel’s recipes come from his late mother, who brought them from Oaxaca. Hands down some of the best Mexican food I’ve had in 51 years of living in LA.

The Main Session: Andrew Peltekci & Justin Walker

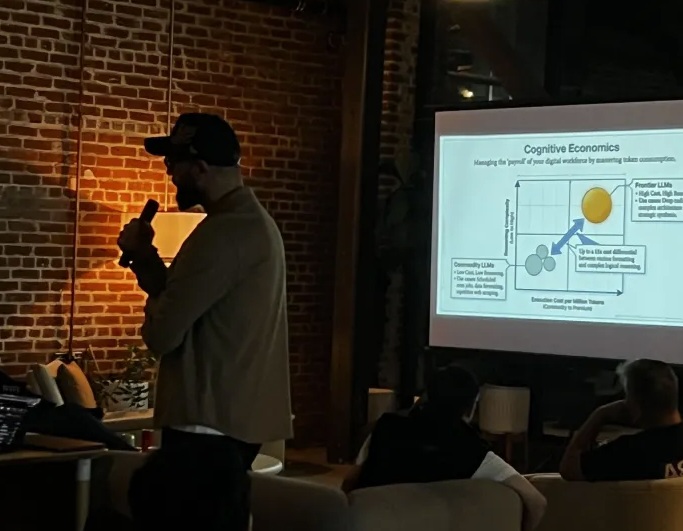

Andrew and Justin from Cofounded kicked things off with a session on OpenClaw best practices, and they didn’t hold back. These two are power users. By their own count, they’re burning through about 1.5 billion tokens per week between the two of them. That number alone tells you how seriously they’re pushing these systems.

Think of it like a company org chart. Andrew’s core framework for thinking about OpenClaw is to treat it like an organizational hierarchy. You’ve got your CEO (a master orchestrator agent), your VPs (specialized agents for coding, planning, analysis), and your workers (sub-agents that handle individual tasks). When you separate the workload this way instead of cramming everything into one agent session, you start getting parallel results instead of bottlenecked, sequential ones.
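To make the org-chart idea concrete, here's a minimal sketch of an orchestrator fanning tasks out to specialized workers in parallel. This is not OpenClaw's API. `run_worker`, `orchestrate`, and the role names are hypothetical, and the worker just sleeps to simulate model latency.

```python
import asyncio

# Hypothetical stand-in for a sub-agent call; a real worker would invoke a model.
async def run_worker(role: str, task: str) -> str:
    await asyncio.sleep(0.01)  # simulate model latency
    return f"[{role}] done: {task}"

async def orchestrate(assignments: dict[str, str]) -> list[str]:
    """Fan tasks out to specialized workers and wait for all of them together."""
    jobs = [run_worker(role, task) for role, task in assignments.items()]
    # gather runs the jobs concurrently and returns results in submission order.
    return await asyncio.gather(*jobs)

def run_demo() -> list[str]:
    return asyncio.run(orchestrate({
        "coding": "fix the login bug",
        "planning": "draft next sprint",
        "analysis": "summarize usage logs",
    }))

if __name__ == "__main__":
    for line in run_demo():
        print(line)
```

Because the three workers run concurrently, the total wait is roughly that of the slowest worker rather than the sum of all three, which is the bottleneck the single-session approach runs into.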

Token economics are going to get worse. Both speakers made the point that LLM providers are giving us subsidized rates right now. They shared a real-world example: a custody advocate OpenClaw instance they built for someone going through a contentious custody battle. The system ingested a thousand pages of court-ordered communication history, generated over 150 documents, and helped prepare a full declaration filing. Three days of work. $350 in Claude API tokens. It was worth it for that specific case (far cheaper than lawyers), but that kind of spend isn’t sustainable for ongoing use.

Local models are closer than you think. Their answer to the token cost problem is offloading work to local open-source models. They spun up Qwen 3.5 (35 billion parameters) on a Ryzen 5 mini PC with 32GB of RAM and no dedicated GPU. No fancy hardware. By their account, that model performs on par with the best frontier models from three to six months ago. Their argument: you don’t need the latest iPhone if last year’s model still does the job.

Memory is the single biggest differentiator. This was the part of the talk that resonated most with the room. By default, OpenClaw uses a flat file system for memory, which works fine for small tasks but falls apart at scale. Both Andrew and Justin have invested heavily in knowledge graph-based memory systems. Justin uses Mem0 (an open-source memory layer), while Andrew built his own multi-agent memory system from scratch, using four types of memory modeled after how the human brain stores and recalls information, complete with a 24-hour decay cycle.
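The talk didn't go into how Andrew's decay cycle is implemented, so treat the following as one possible reading, not his design. This sketch assumes "24-hour decay" means a memory's strength halves every 24 hours since it was last recalled. The `MemoryItem` fields and the recall threshold are made up for illustration.

```python
from dataclasses import dataclass

# "24 hours" comes from the talk; treating it as a half-life is an assumption.
DECAY_PERIOD_HOURS = 24.0

@dataclass
class MemoryItem:
    text: str
    strength: float   # importance when stored, between 0 and 1
    age_hours: float  # hours since the memory was last recalled

def current_strength(item: MemoryItem) -> float:
    """Halve the stored strength for every decay period that has elapsed."""
    return item.strength * 0.5 ** (item.age_hours / DECAY_PERIOD_HOURS)

def recall(items: list[MemoryItem], threshold: float = 0.2) -> list[str]:
    """Return memories still at or above the threshold, strongest first."""
    alive = [item for item in items if current_strength(item) >= threshold]
    alive.sort(key=current_strength, reverse=True)
    return [item.text for item in alive]

memories = [
    MemoryItem("prefers TypeScript", strength=1.0, age_hours=24),
    MemoryItem("asked about lunch spots", strength=1.0, age_hours=72),
    MemoryItem("deadline moved to Friday", strength=0.8, age_hours=0),
]
```

With these sample values, `recall(memories)` keeps the deadline and the TypeScript preference and drops the three-day-old lunch question, which has decayed below the threshold.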

The knowledge graph layer is what takes memory from “I remember this fact” to “I understand how this fact relates to everything else.” Without it, your agent can recall that you told it something six months ago, but it has no idea how that connects to what you’re working on today.
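Here's a rough sketch of that difference. It is not Mem0's API or either speaker's implementation. The `KnowledgeGraph` class and the sample facts are hypothetical, and the point is only that linked facts can be reached by walking relations instead of matching a single stored string.

```python
from collections import defaultdict

class KnowledgeGraph:
    """Tiny in-memory graph: facts are (subject, relation, object) triples."""

    def __init__(self) -> None:
        self.edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        # Store both directions so a walk can start from either end.
        self.edges[subject].append((relation, obj))
        self.edges[obj].append((f"inverse of {relation}", subject))

    def related(self, start: str, max_hops: int = 2) -> list[str]:
        """Breadth-first walk: every node reachable within max_hops of start."""
        seen = {start}
        frontier = [start]
        found: list[str] = []
        for _ in range(max_hops):
            next_frontier = []
            for node in frontier:
                for _relation, other in self.edges.get(node, []):
                    if other not in seen:
                        seen.add(other)
                        found.append(other)
                        next_frontier.append(other)
            frontier = next_frontier
        return found

graph = KnowledgeGraph()
graph.add("vendor contract", "signed by", "Dana")
graph.add("Dana", "leads", "Q3 launch")
graph.add("Q3 launch", "blocked by", "payment integration")
```

A flat memory file would only hold the fact that the vendor contract exists. Calling `graph.related("vendor contract")` also surfaces Dana and the Q3 launch, because the walk follows the relations outward from the starting fact.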

Orchestration-first behavior changes everything. Justin demonstrated how most people run their OpenClaw the wrong way: every task happens in the main session, the agent goes quiet while it processes, and you can’t do anything else until it finishes. The fix is to enforce orchestration-first behavior, where the main session only handles your direct conversation. All real work gets delegated to sub-agents (either permanent specialized ones or temporary ephemeral ones). Justin compared ephemeral agents to handing a post-it note to an intern: you scope the task, include only the context they need, and let them go. No reason to load your entire life history into the context window just to build a command center.
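The post-it note idea can be sketched as a small data structure. This is an illustration only: `TaskBrief`, `scope_brief`, and the context keys are hypothetical names, not anything OpenClaw provides.

```python
from dataclasses import dataclass, field

@dataclass
class TaskBrief:
    """The 'post-it note' handed to an ephemeral sub-agent."""
    goal: str
    context: dict[str, str] = field(default_factory=dict)

def scope_brief(goal: str, full_context: dict[str, str], needed: list[str]) -> TaskBrief:
    """Copy only the named context entries into the brief, nothing else."""
    missing = [key for key in needed if key not in full_context]
    if missing:
        raise KeyError(f"missing context keys: {missing}")
    return TaskBrief(goal, {key: full_context[key] for key in needed})

# Everything the main session knows (sample values for illustration).
main_session_context = {
    "repo_url": "https://example.com/dashboard.git",
    "style_guide": "dark theme, compact tables",
    "family_calendar": "school pickup at 3pm",
    "tax_notes": "file extension by April",
}

brief = scope_brief(
    goal="Build the Kanban view for the command center",
    full_context=main_session_context,
    needed=["repo_url", "style_guide"],
)
```

The sub-agent receives `brief` and nothing more, so the calendar and tax notes never enter its context window. Raising on a missing key is a deliberate choice here: it is better for the orchestrator to notice a gap than for the worker to guess.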

Build a command center. Justin showed off his “Aurora Mission Control,” a custom dashboard that gives him visibility into everything his agents are doing: Kanban task boards, active agent status, running processes, GitHub PRs, cron jobs, skills, and a knowledge graph visualizer. He shared prompts for building your own at cofounded.io/prompts, and recommended this order: (1) set up orchestration-first behavior, (2) build your command center, (3) integrate Mem0.

Core file management matters more than people realize. One detail that caught the room off guard: OpenClaw’s identity and soul files have a hard limit of 20,000 characters. The system keeps adding to these files, but when it reads them, it truncates at 20,000. So if your important behavioral instructions get pushed past that cutoff, your agent just stops following them. Justin set up skills that monitor file size and prune aggressively as they approach the limit, while keeping backup copies of the full uncompressed files in case the pruning goes too far.
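A size check along these lines is easy to sketch. The 20,000-character limit is the figure from the talk. The 90% warning threshold, the function names, and the `.bak` backup naming are my assumptions, and the pruning step itself (deciding what to cut) is left out.

```python
import shutil
from pathlib import Path

CHAR_LIMIT = 20_000  # read-time cutoff reported in the talk
WARN_RATIO = 0.9     # hypothetical: start warning at 90% of the limit

def check_file(text: str, limit: int = CHAR_LIMIT, warn_ratio: float = WARN_RATIO) -> dict:
    """Report how close a core file is to the cutoff and what would be lost."""
    length = len(text)
    if length > limit:
        status = "over"
    elif length >= limit * warn_ratio:
        status = "warn"
    else:
        status = "ok"
    # Anything past the limit is what a reader truncating at `limit` never sees.
    return {"length": length, "status": status, "lost_tail": text[limit:]}

def prune_with_backup(path: Path, pruned_text: str) -> Path:
    """Save the full file next to the original, then write the pruned version."""
    backup = path.with_suffix(path.suffix + ".bak")
    shutil.copy2(path, backup)
    path.write_text(pruned_text, encoding="utf-8")
    return backup
```

Run `check_file` on a schedule against each core file and only prune once the status reaches `"warn"`. Keeping the backup means an overly aggressive prune can be undone by copying the `.bak` file back.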

Security is non-negotiable. Both speakers were emphatic: never expose your OpenClaw to a public endpoint. Hackers run automated tools that crawl for open endpoints, and they will find yours. Use something like Tailscale (a mesh VPN) so only authenticated devices on your network can access it. The early wave of people getting compromised happened because they skipped this step.

Lightning Round: 5-Minute Demos

After the main session, we ran a series of quick demos from builders in the community.

Daniel May (X) brought the perspective of a veteran software engineer (formerly at Riot Games) who’s been running local inference at home. He walked through his journey of buying GPUs off Craigslist (which turned into an unexpectedly sketchy adventure in a warehouse district), getting them running with ROCm, and now hosting Qwen 3 (22 billion parameters) locally with about 144K context. His bigger point was about software abstraction: the reason non-technical people are building ambitious things with AI right now isn’t that they’re smarter; it’s that they don’t carry the baggage of knowing how things “should” work. He also raised a sharp concern about hosted inference, pointing out that when you route everything through OpenAI or Anthropic, you’re accepting their content policies, their rate limits, and their model deprecation schedules as constraints on your own product.

Tyler Bittner (GitHub) shared lessons from building a multi-agent system that discovers and scores tech product opportunities by processing newsletters, evaluating models, and aligning findings with his career history and interests. His biggest takeaway: stop trying to force traditional software engineering patterns onto agent workflows. He initially tried imposing a rigid pipeline orchestration system on top of what was already working, and the quality dropped. The agent already knows how to manage context and sessions. Let it.

Lex Dreitser (VR Generalist) showed a creative workflow for using OpenClaw with Unreal Engine, UEFN, and Roblox, and the whole thing costs zero tokens. His setup uses a bare-bones OpenClaw installation with a single skill (Claw Cursor) that lets the agent control his desktop directly, including mouse and keyboard. That means it can operate Cursor (the AI code editor), browse the web, open applications, and interact with Unreal Engine’s UI, all without paying for search APIs or extra integrations. He’s also building a MetaHuman-based voice-to-face system using NVIDIA’s tech that will let you talk to your OpenClaw agent through a realistic human face. Expected release around July, and it’ll be a free skill.

Phil Mannle pitched Cliver, an agent-to-agent marketplace he’s building that’s essentially a cross between OpenClaw and Fiverr. The idea: you build a skill (say, an amazing TikTok video pipeline), break it into composable pieces, and list those pieces on a marketplace where other agents can hire your agent to perform them. Your OpenClaw logs in through an MCP server, creates a gig, sets its own price, and handles client interactions through a chat interface. The marketplace provides API access to services like RunPod, Hugging Face, and Gemini at cost, taking a percentage of completed gigs instead of charging for API access. It’s not live yet, but the architecture is mapped out and working locally.

David Abilez (Praxis) introduced Praxis, a curated AI tool search engine with the top 250 AI tools indexed. You search by task, and it returns the five most relevant tools with reasoning for why each fits your specific needs. He’s planning to roll out an ecosystem feature that lets users sign into tools like Claude, ChatGPT, and others directly from the platform.

Archie (Instagram) gave a real-time demo of his OpenClaw instance, where he names his agents after members of the Wu-Tang Clan. RZA handles long-term strategy, Method Man is the lead programmer, and new agents get added as the workload grows. He showed his mission control dashboard with a Kanban board, agent status tracking, daily accomplishment logs, and a calendar synced to his Gmail. He’s also tracking his bot’s full configuration in Git, so if it catastrophically breaks, he can roll back to a known good state. Smart move.

What’s Next

OpenClaw LA #4 is already on the calendar. If you want to present at the next one or are interested in sponsoring, visit OpenClaw LA. We’re also running AI in Production that same week.

The space is moving fast. Three events in and the conversations have shifted from “what is this thing” to “here’s how I architected my memory system and why yours is probably leaking context.” That’s progress.

See you at the next one.