Agentic Engine Optimization Is Real. Most Marketing Content Is Not Ready.

Google's latest agentic content guidance changes how marketers should structure pages for AI systems, citations, and high-intent discovery in 2026.

Marketing teams finally got a blunt explanation of why so much content underperforms in AI search, even when it ranks decently in Google. On April 15, Search Engine Land reported on new guidance from Google Cloud AI director Addy Osmani: AI agents do not browse like humans. They extract. They truncate. They skip. And when a page makes them work too hard, they move on.

That sounds technical, but the business impact is simple. If your page hides the answer, buries the useful details under fluff, or forces the model to wade through navigation noise and vague copy, you lose the chance to be used, cited, or clicked.

This is the next content split in search. Human-friendly writing still matters. Traditional SEO still matters. But now your page also has to perform for machine readers that are impatient, token-limited, and increasingly responsible for the recommendation before the click.

Most agency content is not built for that.

What changed this week, and why it matters

Osmani calls the framework Agentic Engine Optimization and treats it as separate from the answer engine optimization most marketers already talk about. The naming is messy. The signal is not.

The useful part of the guidance is this: AI systems often make decisions under context-window limits. If a page is too long, too noisy, or too poorly structured, the model may never reach the part that actually answers the question. According to the Search Engine Land writeup, the practical recommendations were to put the answer early, keep pages focused, and reduce unnecessary layout and markup clutter where possible.

That lines up with what marketers are already seeing in live AI behavior. Semrush’s new clickstream analysis found that referral traffic from ChatGPT to websites grew 206% in 2025, even though the platform used live web search on just 34.5% of queries as of February 2026. In other words, the web still matters, but the model is selective about when it reaches for it.

That is the tension. Fewer queries may trigger live retrieval than many teams assume, but when retrieval does happen, the page has to be easy to parse fast. You are not competing for endless chances. You are competing for a short list of moments when the model decides your content is worth using.
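One rough way to act on the advice to reduce layout and markup clutter is to compare how much visible copy a page carries against the size of its raw HTML. The sketch below is a minimal version of that check using only Python's standard library. The text-to-HTML ratio is a common SEO heuristic, not something the guidance prescribes, and the list of skipped tags and the function names are hypothetical choices you should adapt to your own templates.

```python
from html.parser import HTMLParser

# Tags whose contents are not reader-facing copy. This list is an assumption;
# adjust it to match your own templates.
SKIP_TAGS = {"script", "style", "noscript", "svg", "nav", "footer"}


class VisibleTextParser(HTMLParser):
    """Collects text that sits outside the skipped tags."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # how many skipped tags are currently open
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a skipped tag.
        if self.skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def text_to_html_ratio(html: str) -> float:
    """Share of the raw HTML, by character count, that is visible body text."""
    if not html:
        return 0.0
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks)
    return len(text) / len(html)
```

A low ratio does not prove a page is hard for agents to read, but comparing it across your own templates can show which layouts bury the copy under navigation, scripts, and wrappers.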

Stop writing pages like the reader will patiently scroll forever

A lot of content marketing still assumes the visitor will tolerate a slow ramp.

Paragraph one sets the scene. Paragraph two broadens the trend. Paragraph three repeats the promise. Paragraph four finally starts answering the question. That structure was already weak for featured snippets. It is worse for AI systems.

Agentic consumption punishes throat-clearing. If the answer is not visible near the top of the page, the model may extract a competitor’s cleaner paragraph instead. If your heading says one thing and the body wanders into generic filler, your page becomes harder to trust as a source block.

This is one reason healthcare marketers need to pay especially close attention. In regulated and high-trust categories, clarity matters twice. DexCare’s April guidance for health system marketers notes that AI Overviews can push clickthrough rate down from 1.6% to 0.6% when they appear (a relative drop of more than 60%), citing Seer Interactive data reported in Healthcare Brew. If clicks are already getting compressed, your page has to win at the answer layer before the visit.

That means:

- lead with the answer, not the setup

- use headings that map to real questions

- keep each section focused on one job

- cut repetitive intros and empty transitions

- make proof easy to find, not hidden in the back half of the article

This is not about writing shorter for the sake of it. It is about making the most valuable information available early, so both humans and models can understand what the page is for.

The new content stack is answer first, proof second, expansion third

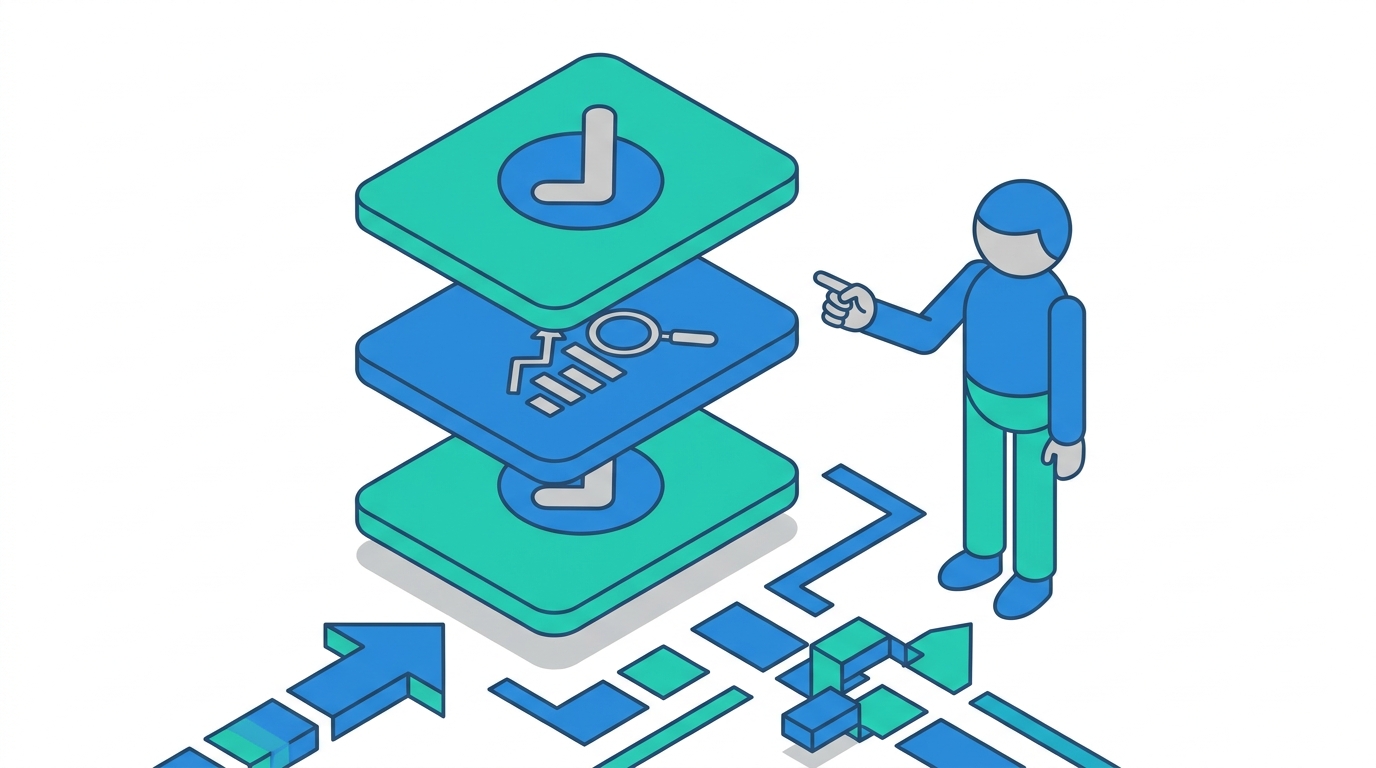

The easiest way to think about agentic-ready content is to separate it into layers.

Layer 1: The direct answer

The top of the page should give a clean, specific answer in plain language. Not a teaser. Not a long preamble. The answer.

If someone asks, “What is agentic engine optimization?” the page should define it immediately. If the query is “How do I make service pages easier for AI tools to cite?” the first section should give the operational answer up front.

Layer 2: The proof

Once the answer is clear, show why it should be trusted. This is where statistics, named sources, case examples, screenshots, and original observations matter.

A good example is the gap between AI usage and web referral behavior. Semrush’s analysis shows ChatGPT has plateaued around 1 billion monthly visits while outbound referrals to the web still grew sharply. That tells marketers something important: these systems are not replacing the web completely, but they are becoming the control layer in front of it.

Layer 3: The deeper expansion

After the answer and proof come the details for serious readers. This is where examples, tradeoffs, implementation notes, and objections belong. Long-form content still works. It just needs a better top half.

That structure is also more usable for AI systems. A model can grab the direct answer, verify it against the proof, and still find deeper context if needed. That is a much healthier citation environment than a bloated article with 800 words of scene-setting before the first concrete claim.

Why this matters for agencies right now

Agencies are about to get squeezed from two directions.

First, clients are seeing traffic patterns change and want explanations that go beyond rankings. Second, AI systems are changing what kind of content gets surfaced in the first place.

If your agency still produces pages that are technically optimized but structurally vague, you will feel this on both sides. The page may rank reasonably, but it may fail to earn citations, AI mentions, or high-intent visits. Then the client sees fewer qualified outcomes and starts asking what the content program is actually doing.

This is why I think agentic structure is not a niche technical tweak. It is a delivery problem for every agency offering SEO, content, AEO, or healthcare marketing.

We have already seen the practical version of this on Emarketed accounts. Seasons in Malibu holds 4,200+ keyword rankings and 814,230 monthly social impressions. At the same time, their AI mentions climbed from 49 to 122. That is not a random vanity metric. It reflects what happens when a brand becomes easier for search systems and AI answers to reference across the decision journey.

The lesson is not “write for bots.” The lesson is that clarity compounds across channels. A page that explains a topic cleanly is more useful to patients, more useful to search engines, and more usable inside AI-generated answers.

What to change on your site this month

If you want a practical playbook instead of another trend essay, start here.

1. Rewrite your first 500 tokens on key pages

Pick your top service pages, category pages, and highest-value blog posts. Read only the first 500 tokens, which for English prose works out to roughly 375 words, or the top few paragraphs. Ask one question: if an AI system stopped reading here, would it still understand the core answer?

If the answer is no, rewrite it.

For most pages, this means moving the clear definition, offer, or takeaway much higher. It also means cutting vague brand language that sounds polished but says very little.
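If you want to run this check across many pages, a small script can cut each page's copy at an approximate 500-token budget and report which must-have terms never show up inside it. This is a minimal sketch that assumes you already have the page's visible copy as plain text. It uses the common rule of thumb that one token is about 0.75 English words; real tokenizers vary by model, so the cut point is approximate. The function names are hypothetical.

```python
# Rule of thumb for English prose: one token is roughly 0.75 words.
# Real tokenizers differ by model, so treat the cut point as approximate.
WORDS_PER_TOKEN = 0.75


def preview(text: str, token_budget: int = 500) -> str:
    """Return roughly the first `token_budget` tokens of text, cut on word boundaries."""
    words = text.split()
    word_budget = int(token_budget * WORDS_PER_TOKEN)
    return " ".join(words[:word_budget])


def missing_from_preview(text: str, key_terms: list[str], token_budget: int = 500) -> list[str]:
    """List key terms (definition, offer, takeaway) that never appear in the preview."""
    head = preview(text, token_budget).lower()
    return [term for term in key_terms if term.lower() not in head]
```

Pass in the two or three terms the page must define up front. An empty result only means those terms appear early; whether the opening actually answers the question still needs a human read.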

2. Break pages into extractable sections

Each H2 should answer a specific sub-question. Each section should be able to stand on its own.

This helps humans scan. It also helps models isolate the part of the page they need. A strong section title is often the difference between being extractable and being ignored.

If you need a quick way to pressure-test this, paste only your headings into a doc. If they do not tell a coherent story by themselves, the page structure probably needs work. You can also use Emarketed’s AI Search Optimizer to benchmark whether your current pages are actually showing up across answer engines.
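The headings-only test can also be automated. The sketch below pulls the h1 through h3 headings from a saved HTML page, in document order, and prints them as an indented outline so you can read the structure on its own. It is a minimal example built on Python's standard html.parser module; the class and function names are hypothetical, and it assumes headings are marked up with real heading tags rather than styled divs.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3"}


class HeadingOutline(HTMLParser):
    """Collects (level, text) pairs for h1-h3 headings in document order."""

    def __init__(self):
        super().__init__()
        self.current = None  # heading tag currently open, if any
        self.buffer = []
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self.current = tag
            self.buffer = []

    def handle_endtag(self, tag):
        if tag == self.current:
            # Collapse internal whitespace so inline tags do not leave gaps.
            text = " ".join("".join(self.buffer).split())
            self.headings.append((int(tag[1]), text))
            self.current = None

    def handle_data(self, data):
        if self.current is not None:
            self.buffer.append(data)


def outline(html: str) -> str:
    """Return the headings as an indented outline, one per line."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    return "\n".join("  " * (level - 1) + text for level, text in parser.headings)
```

Inline markup inside a heading, such as an emphasized word, is folded into the heading text, so the outline reads the way the heading would to a visitor.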

3. Cut decorative copy that does not carry information

A lot of agency pages are full of phrases that sound reassuring but provide no usable detail. AI systems do not care that you are “results-driven” or “customer-centric.” They care whether the page clearly states what you do, for whom, why it matters, and what evidence supports the claim.

This is one place where healthcare content has an advantage when done well. Swaay.Health’s interview with Envision Health stressed the same point in plain language: no marketing babble, no mumbo jumbo, just clear explanations patients can actually understand.

That is good writing advice. It is also good machine-readability advice.
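A simple phrase scan can help an editor find decorative copy before a page goes live. The sketch below counts whole-phrase, case-insensitive matches against a filler list. Only "results-driven" and "customer-centric" come from this article; the other phrases are hypothetical examples, and any real list should be built per client. A zero count does not mean the copy is specific, only that these particular phrases are absent.

```python
import re

# Illustrative list only. "results-driven" and "customer-centric" come from the
# examples above; the rest are hypothetical additions to adapt per client.
FILLER_PHRASES = [
    "results-driven",
    "customer-centric",
    "best-in-class",
    "world-class",
    "cutting-edge",
    "innovative solutions",
]


def find_filler(text: str, phrases=FILLER_PHRASES) -> dict[str, int]:
    """Count case-insensitive, whole-phrase occurrences of each filler phrase."""
    counts = {}
    for phrase in phrases:
        # Lookarounds stop a phrase from matching inside a longer word.
        pattern = r"(?<!\w)" + re.escape(phrase) + r"(?!\w)"
        hits = len(re.findall(pattern, text, flags=re.IGNORECASE))
        if hits:
            counts[phrase] = hits
    return counts
```

Each hit is a prompt to replace the phrase with a concrete statement of what you do, for whom, and what evidence backs it.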

4. Add proof close to the claim

Do not make the reader hunt for the evidence. If you claim AI search is changing traffic behavior, cite the source right there. If you claim a client improved AI visibility, show the number near the claim.

Proximity matters. It helps readers trust the point immediately. It also helps extraction systems connect the statement to its supporting evidence.
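To spot claims that sit far from their evidence, one crude check is to flag paragraphs that state a number but contain no link. The sketch below does that with Python's standard library. It rests on two stated assumptions: a "claim" is any paragraph containing a digit, and a "source" is an anchor tag with an href inside the same paragraph. It will miss qualitative claims and text citations like "according to Semrush," and a link does not prove the source supports the number. The names are hypothetical.

```python
import re
from html.parser import HTMLParser

# Assumed heuristic: any paragraph containing a number counts as a claim.
NUMBER = re.compile(r"\d[\d,.]*%?")


class ParagraphScanner(HTMLParser):
    """Records each <p> as (text, has_link)."""

    def __init__(self):
        super().__init__()
        self.in_p = False
        self.text = []
        self.has_link = False
        self.paragraphs = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self.in_p, self.text, self.has_link = True, [], False
        elif tag == "a" and self.in_p and dict(attrs).get("href"):
            self.has_link = True

    def handle_endtag(self, tag):
        if tag == "p" and self.in_p:
            self.paragraphs.append(("".join(self.text).strip(), self.has_link))
            self.in_p = False

    def handle_data(self, data):
        if self.in_p:
            self.text.append(data)


def unsourced_number_claims(html: str) -> list[str]:
    """Return paragraphs that state a number but contain no linked source."""
    scanner = ParagraphScanner()
    scanner.feed(html)
    scanner.close()
    return [text for text, linked in scanner.paragraphs
            if NUMBER.search(text) and not linked]
```

Treat the output as a review queue: each flagged paragraph either needs its source moved next to the number or a clearer in-text attribution.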

5. Treat service pages like answer assets, not brochures

This is a big one.

Many service pages are written like sales collateral. They talk about process, values, and generic benefits but never become the best answer to a real category question. That is a missed opportunity.

A strong service page should still sell, but it should also answer the core questions someone would ask in Google, ChatGPT, or Perplexity before contacting you. If you want a better model, study pages that combine positioning with genuine explanation, like solid category pages in /services/ or educational resource pages that solve a real problem.

Do not confuse machine-readable with machine-only

There is a bad version of this trend already forming.

Some teams hear “agents need structured content” and decide the solution is to publish stripped-down junk pages built only for machines. That is the wrong takeaway.

Even the Search Engine Land coverage noted the tension here. Google Search does not use llms.txt as a ranking input, and John Mueller has already pushed back on the idea that separate markdown-only pages are the answer. The goal is not to build a shadow web for crawlers. The goal is to make your real content easier to understand.

That means the winning format is usually not “more pages.” It is better pages.

Clearer openings. Better headings. Less filler. Stronger evidence. More explicit use cases. Cleaner page intent.

If your current content is dense, repetitive, or abstract, those fixes will help regardless of whether the visitor is a person, a search engine, or an AI retrieval layer.

The bigger shift: AI systems are becoming the pre-click editor

This is the part many marketers still underestimate.

The old search model gave your page a chance to make its case after the click. The new model often makes a preliminary decision before the click ever happens. An AI system summarizes your page, compares it to others, and decides whether your content deserves a citation, a recommendation, or nothing at all.

That turns page structure into a visibility issue, not just a UX issue.

It also changes how agencies should explain content value to clients. The content program is no longer just trying to win rankings and sessions. It is trying to become the source material that AI systems trust enough to use.

That is a more demanding standard. It is also a more defensible one.

Teams that adapt now will build cleaner content libraries, stronger service pages, and more durable citation visibility. Teams that keep publishing bloated, vague, SEO-by-template pages will keep wondering why their traffic story and their business results are drifting apart.

FAQ

What is agentic engine optimization?

Agentic engine optimization is the practice of structuring content so AI agents can parse, understand, and act on it efficiently. In practical terms, it means clearer openings, tighter sections, less fluff, and more explicit answers near the top of the page.

Is agentic engine optimization the same as answer engine optimization?

No. They overlap, but they are not identical. Answer engine optimization focuses on getting cited or surfaced in AI answers. Agentic engine optimization focuses more broadly on making content usable for AI systems that retrieve, summarize, and act on information.

Does this replace SEO?

No. Traditional SEO is still the foundation. Strong crawlability, internal linking, backlinks, and page quality still matter. What changed is that ranking alone is no longer enough. The page also has to be easy for AI systems to consume.

How long should pages be for AI search?

There is no perfect word count. The better question is whether the answer appears early and whether each section earns its place. Long pages can still work well if they are tightly structured and easy to extract from.

Should brands create separate markdown pages for AI tools?

Usually, no. The safer move is to improve the structure and clarity of the main page rather than spin up duplicate machine-only versions. Separate formats may help in some documentation contexts, but most marketing teams should focus on making their core pages better first.

What should marketers do first after reading this?

Audit the first screen and first 500 tokens of your most valuable pages. If the answer is buried, move it up. If the headings are vague, rewrite them. If the page sounds polished but empty, replace generic copy with specifics and proof.

The smart next move for most teams is not a full rebuild. It is a ruthless rewrite of the top section on the pages that matter most.